Chen Shen (申晨)

Email: jason.sc@alibaba-inc.com / zjushenchen@gmail.com

Senior Algorithm Expert | Tongyi Lab

I received my Ph.D. and B.S. degrees from Zhejiang University in 2018 and 2012, respectively.

My research focuses on LLM post-training, bridging the gap between cutting-edge academic research and large-scale industrial deployment. My current interests include:

- Reasoning & Agents: Enhancing model reasoning and agent capabilities through knowledge distillation and reinforcement learning (RL).
- Model Safety: Building LLM safety guardrails that intercept multi-dimensional risks, including content violations, prompt injection, jailbreak attacks, and model hallucinations, to ensure end-to-end security across the lifecycle, from AIGC to AI Agent operations.


We are recruiting self-motivated interns with a strong LLM background. Please feel free to contact me via Email or WeChat.

news

| Apr 07, 2026 | Two papers are accepted by ACL 2026. |
|---|---|
| Jan 26, 2026 | Three papers are accepted by ICLR 2026, including one oral. |
| Sep 25, 2025 | One paper is accepted by NeurIPS 2025. |
| Sep 04, 2025 | Two papers are accepted by EMNLP 2025, including one oral. |
| May 16, 2025 | One paper is accepted by ACL 2025. |
| Jan 23, 2025 | Two papers are accepted by ICLR 2025. |
| Jan 23, 2025 | Two papers are accepted by NAACL 2025, including one oral. |
| Sep 26, 2024 | One paper is accepted by NeurIPS 2024. |
| May 17, 2024 | One paper is accepted by KDD 2024. |
| May 02, 2024 | One paper is accepted by ICML 2024. |

featured projects

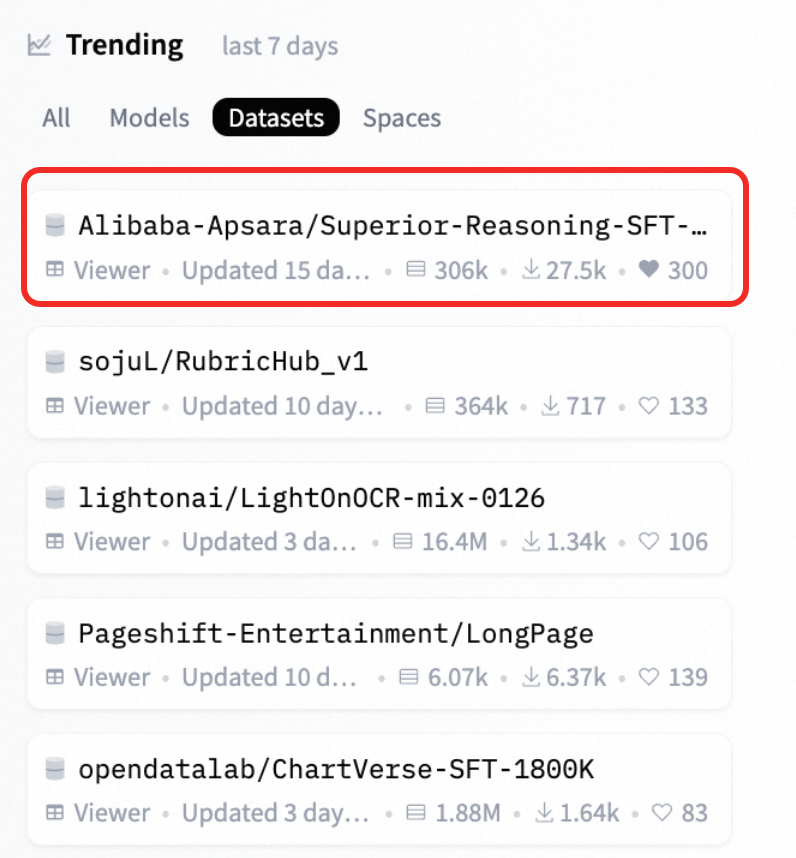

Distribution-Aligned Sequence Distillation for Superior Long-CoT Reasoning

We released the technical report "Distribution-Aligned Sequence Distillation for Superior Long-CoT Reasoning", along with two reasoning models trained from Qwen base models (DASD-4B-Thinking and DASD-30B-A3B-Thinking-Preview) and the corresponding training data. After open-sourcing, the work received an enthusiastic response from the Hugging Face community: our training data quickly topped the Hugging Face Datasets Trending leaderboard, ranking #1 for over ten consecutive days (Jan 20-31). Total downloads exceeded 70K within three weeks, and the models and their derived models accumulated over 20K downloads.

AI Safety Guardrail based on Tongyi-Skynet LLM

I led the R&D of the Tongyi-Skynet LLM-based AI Safety Guardrail project, which was honored as a 2025 Alibaba & Ant Group Outstanding Technical Project. It was one of only three projects selected from Alibaba Cloud.

On Alibaba Cloud, our model provides security protection for hundreds of millions of calls daily, covering multimodal scenarios (text, images, etc.) and addressing multi-dimensional risks such as content compliance violations and prompt injection. This helps enterprises on the cloud build highly available, compliant, closed-loop AI applications.

selected publications

(*Corresponding Author or Project Lead)

- [ICLR] Where Did This Sentence Come From? Tracing Provenance in LLM Reasoning Distillation. ICLR 2026; arXiv preprint arXiv:2512.20908, 2026.

- [ICLR Oral] Hallucination Begins Where Saliency Drops. ICLR 2026 (Oral); arXiv preprint arXiv:2601.20279, 2026.

- [ICLR] Differential Fine-Tuning Large Language Models Towards Better Diverse Reasoning Abilities. ICLR 2026.

- [NeurIPS] Instance-adaptive Zero-shot Chain-of-Thought Prompting. In Advances in Neural Information Processing Systems (NeurIPS), 2024.